What is online marketing?

Online marketing is the process of using a variety of digital marketing techniques to reach a specific audience. Common techniques include content marketing, search engine optimization, social media, online video, email marketing, and digital advertising, to name just a few.

These techniques work best when they work together. For example, when you publish educational content on your firm’s blog, you can increase its visibility by incorporating keywords that prospective buyers use in Google to learn about services like yours. You might also promote your post on social media, expanding your reach. You might then summarize key points in a YouTube video, providing yet another way to reach interested parties. If it’s successful, this coordinated effort can drive new traffic to your website and introduce interested, qualified prospects to your services and expertise. At its best, online marketing is a complex ecosystem of tools and techniques that can help your firm achieve greater visibility and credibility.

Since we published our book Online Marketing for Professional Services in 2012, the changes that we observed in the way professional services are bought and sold have come to full fruition. Today, most firms look online to build their reputations, network, and generate leads. Referrals and face-to-face interactions with prospects will always be important components of marketing and business development, but increasingly they play a supporting role.

Just look at LinkedIn. In 2016, LinkedIn had 467 million users. Today, they number over a billion. And according to Hinge’s ongoing research in professional services, LinkedIn is by far professionals’ preferred social media platform. As more and more of professional life moves online, the firms that are most successful will be those that master online marketing.

So what are ten of the top advantages of online marketing, and how can you bring those benefits to your firm? We explore those next.

Advantages of Online Marketing

1. Online marketing gives you many ways to demonstrate and build expertise

For professional services buyers, the single most important factor in selecting a provider is expertise. Often, the challenge lies not in acquiring the requisite talent, but in projecting that expertise to the marketplace.

Your website can be a powerful platform to amplify your expertise. For instance, it can be a convenient place to house your growing library of valuable educational content that you produce on topics relevant to your target audience. Using crosslinks and a carefully conceived offer strategy, you can point readers to additional resources that are relevant to their interests, along the way enticing them to exchange a small amount of personal information for in-depth guides, whitepapers, and other long-format materials. In this way, you convert web visitors into contacts, whom you can nurture over time using email and other techniques.

You can accomplish this sort of engagement offline, as well, but online tools make it much easier to reach a wide and relevant audience. Blogging, social media, and webinars all allow you to educate your audience on topics that matter to them, illustrating your expertise in the process.

2. Online marketing makes it easier to establish and build relationships

Online marketing allows you to create and develop new relationships at scale—something that simply isn’t possible any other way. Email marketing, keyword phrase targeting, online advertising, and other strategies can help you target a tailored message with laser precision to, say, the CIOs of the hundred largest businesses in your industry.

Beyond targeting messages, you can use online forums such as LinkedIn Groups to network and converse with other industry leaders. Online tools allow you to both meet new clients, colleagues, and influencers and strengthen relationships with those you already know.

3. You can target specific verticals or niches using online marketing

Just as you can build relationships in a targeted way, online marketing empowers you to target a highly specific vertical or niche, delivering your message to a wide audience that needs your services. You can do this relatively inexpensively by targeting keywords in educational blog posts, participating in groups, or using industry hashtags on social media. Online marketing allows you to zero in on a niche easily and efficiently.

4. Online marketing isn’t tied to geography or time zone

Online marketing techniques can be used in an asynchronous way, meaning your audience doesn’t have to be constrained by time or geography. To meet a potential client or contact in person, you have to travel and coordinate your schedules, with any expenses this may entail.

Speaking at industry events, for example, can be a powerful way to build your reputation, and it can be a valuable part of a diversified marketing program. But most professionals can’t afford the time and expense required to do frequent public speaking. They can, however, deliver a webinar to an audience of dozens or hundreds of people. Typically, a webinar requires significantly less preparation and just an hour or so to produce, all from the comfort of the presenter’s office or home.

Another advantage of this asynchronicity is that it empowers your audience to engage with your message on their own terms. They can absorb your firm’s expertise at their own pace, reading your blog, following you on social media and watching videos. When they’re ready to explore your services, they know exactly where to find you.

5. Online marketing can be less expensive

With online marketing, there are no travel costs, and you don’t have to pay for printing or postage. The costs of online storage, email, and social media, by contrast, are relatively low.

Some of your traditional advertising costs can be replaced by online marketing tools, as well—and these online tools usually “pull more weight” by integrating with the rest of your online marketing program. Guest posts on industry blogs or publications, for example, can drive traffic to your site, build your reputation, and fuel conversations on social media.

6. Online search is the second most common way people check out your firm

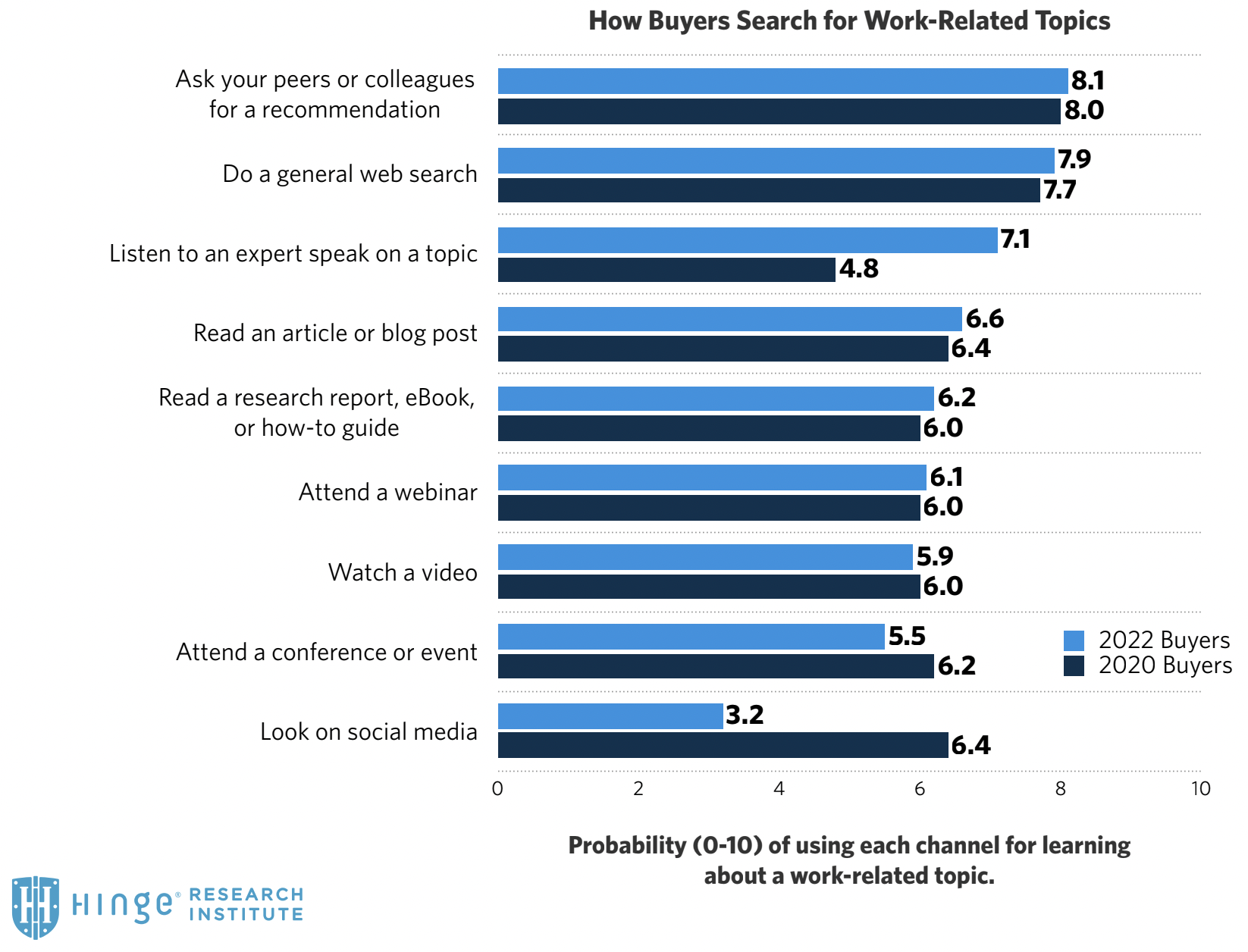

We conducted a survey of over 1,000 buyers of professional services to understand how purchasers research business problems—and ultimately the firms that can solve them—in today’s marketplace. We found that the second most common way professional services buyers check out firms is online.

7. Online marketing allows you to be everywhere your clients look

Today, it’s important for you to be where your potential clients are looking. More and more, that means your firm needs a robust and diversified presence online. From our research, we found that purchasers are looking for experts online in a wide range of places, including in search engines, online reviews, social media, webinars, and more. If you can determine where your buyers look online, you can build visibility in those channels. You can conduct research on your target audience to learn exactly what they read and where they spend their time online consuming business-related information.

Many clients still ask their peers to recommend service providers—but once they’ve learned a bit about you, you have to be easy to find when they search for you online. If you don’t have an online marketing initiative, potential clients will go looking for more information about you—and they might not find you. You may be surprised how many firms are difficult to find online, even when a person searches on their exact name (this is called a branded search). Online marketing can address this shortcoming.

8. You can use online marketing to reach influencers and “invisible” prospects

There are many people who influence the selection process, even if they might not be the final decision-makers. Some of these individuals may be professionals within your target firms, while others may be well-respected industry figures.

By the same token, you may have “invisible” prospects out there of whom you’re simply not aware. You know that certain firms would be a good match for your services, but many others are equally promising and unknown to you. They may be in another part of the country or world, may not participate in the same industry events, or may just not have crossed paths with you.

When you deploy a content marketing strategy, these invisible prospects can find you, even if you don’t find them. For instance, publishing blog posts on a particular set of challenges or opportunities—and optimizing those posts for the right keyword phrases—allows you to “plant a flag” in the topic, helping the right audiences find your work and your firm.

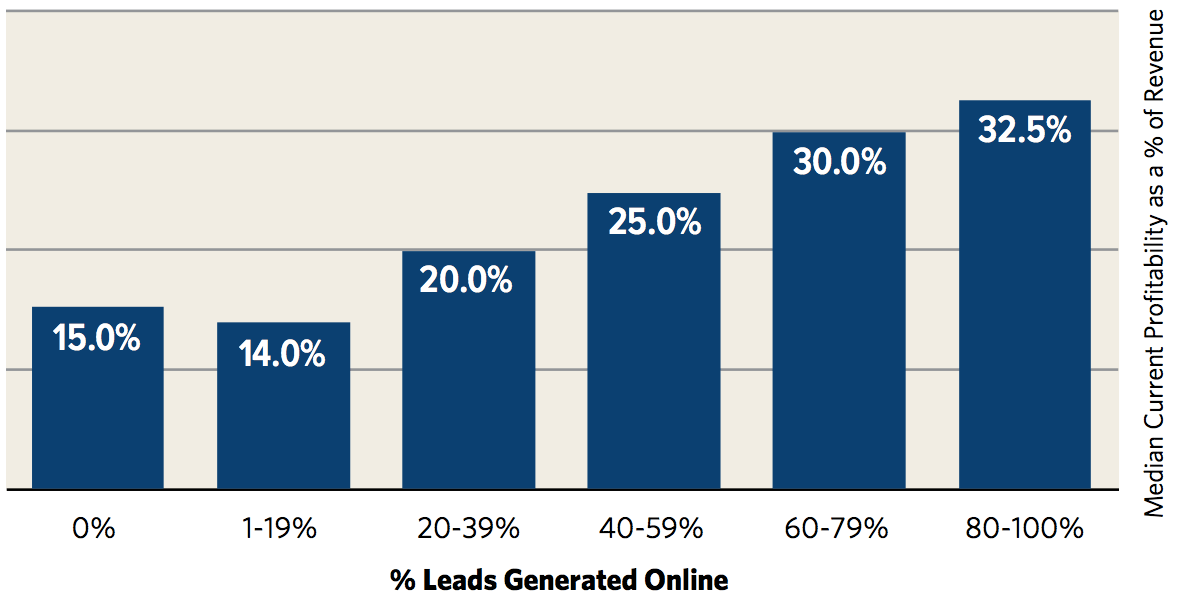

9. Firms that generate leads online achieve greater profits

Our in-depth studies of lead generation strategies for professional services firms have found that firms with online marketing programs are more profitable, on average, than those without them. There is a strong correlation between the percentage of digital leads a firm generates and profitability.

When up to about twenty percent of a firm’s leads are generated online, profitability is relatively flat. But above twenty percent, profitability begins to rise steadily along with the percentage of leads generated online.

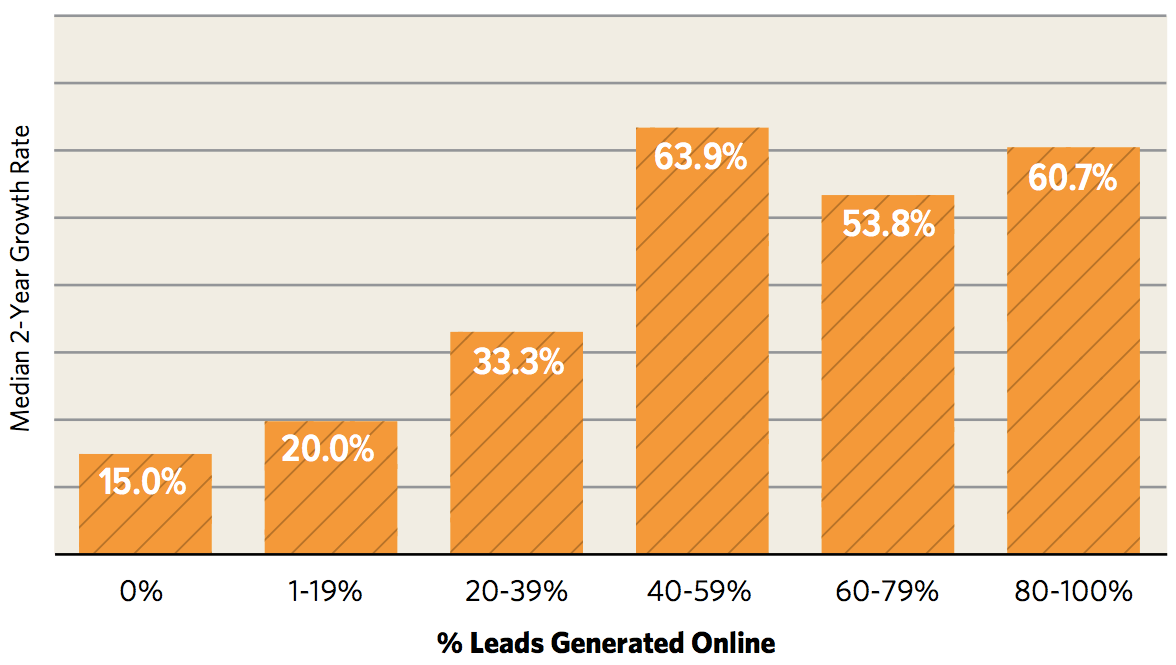

10. Firms that generate leads online achieve faster growth

Our research also shows firms that generate leads online grow at a faster rate.

We found that firms’ growth rates rise along with the proportion of leads generated online, peaking at about fifty percent. This data suggests that the most efficient marketing program—at least in terms of producing growth—is one that includes a roughly equal balance of online and traditional marketing techniques.

Conclusion

Online marketing provides a suite of powerful tools to help expand your firm’s reach and reputation and grow your revenues year after year. By building a marketing program that includes online tools such as content marketing, social media, email marketing, digital advertising, and online video, you can create a lead-generating machine that puts your firm on the path to greater profitability and success.

How Hinge Can Help

Your B2B website should be one of your firm’s greatest assets. Our High Performance Website Program helps firms drive online engagement and leads through valuable content. Hinge can create the right website strategy and design to take your firm to the next level.

Additional Resources

- For more hands-on help on becoming the next Visible Firm®, register for our Visible Firm® course through Hinge University.

- Understand your buyers. Win more business. Read the latest findings from Inside the Buyer’s Brain, Fourth Edition, the biggest study of professional services buyers to date. It’s free!

- Ensure that your website and content get found online with Hinge’s SEO Guide for Professional Services.